We're sharing our abstract and references here, in support of our poster at the DH2024 conference.

Treasures on an island? Challenges for integrating volunteer and AI-enriched metadata into GLAM systems

Digital Humanities 2024 conference, August 2024

Mia Ridge (https://orcid.org/0000-0003-3733-8120), Samantha Blickhan (https://orcid.org/0000-0002-3775-5744) , Meghan Ferriter (https://orcid.org/0000-0003-1470-2655)

There's a long history of successful crowdsourcing projects around the world, many presented at previous DH conferences. However, many of these projects struggle with a critical, final aspect of their project: integrating data created or enriched by online volunteers into collections management systems and discovery interfaces. Early signs indicated that many projects using machine learning / AI tools to enhance or create data would face the same issues. For example the work described in Kasprzik (2023) on transferring results from applied research in automated subject indexing to productive services, and Krc and Schlaack (2023) on the complexities of building and sustaining an automated workflow within a library.

Europeana defines 'enrichments' of metadata as 'data about a cultural heritage object … that augment, contextualise or rectify the authoritative data made available by cultural heritage institutions' (2023). Enrichments include identifying and linking entities to vocabularies, creating or correcting transcriptions or metadata, and annotating items with additional information and links. However, managing enrichments while being transparent about their origins is a complex problem.

Sadly, the potential for volunteer- or AI-enriched data to increase the discoverability of collections is often limited by the affordances of the documentation and collections management systems (CMS) used by galleries, libraries, archives and museums (GLAMs). We present original research on the extent to which enrichment projects have successfully integrated resulting data into core systems, and the factors in the success or failure of this work.

Context: challenges for AI and machine learning in GLAMs

The role of 'human in the loop' processes for quality checking the results of machine learning-generated (ML, or AI if you prefer the marketing term) content such as tags, classifications, labels, transcriptions and descriptions of collection items was an underlying theme of presentations at AI4LAM's November 2023 Fantastic Futures conference.[1] Other recurring topics included presenting potentially less accurate enriched data responsibly; and the tension between GLAM desires to share accurate and authoritative information vs. the potential to generate more, albeit less-accurate, metadata at scale. But perhaps the biggest elephant in the room was the gap between pilots that demonstrate the ability of ML to generate relevant and reasonably accurate metadata, and the work of operationalising ML across entire collections and integrating enriched data into public catalogues and discovery systems. If these issues are not addressed, the impact of ML on collections discovery may be limited.

Existing GLAM workflows and CMS were designed for expert internal cataloguers using precise standards – as earlier generations working with user-generated content or the interpretation of collections by community members found in the noughties.[2] These systems need adapting or extending to ingest and manage data produced through non-traditional methods such as crowdsourcing or ML.

Our research: where possibility meets responsibility

We identified the issues and tensions above in a White Paper (Ridge, Blickhan, and Ferriter 2023) that provided recommendations for the future of crowdsourcing in cultural heritage and the digital humanities,[3] presented at the Digital Humanities 2023 conference in Graz. The research we conducted for this poster is inspired by those challenges and recommendations, and by developments in the AI4LAM and Collections as Data movements (Padilla et al. 2023).

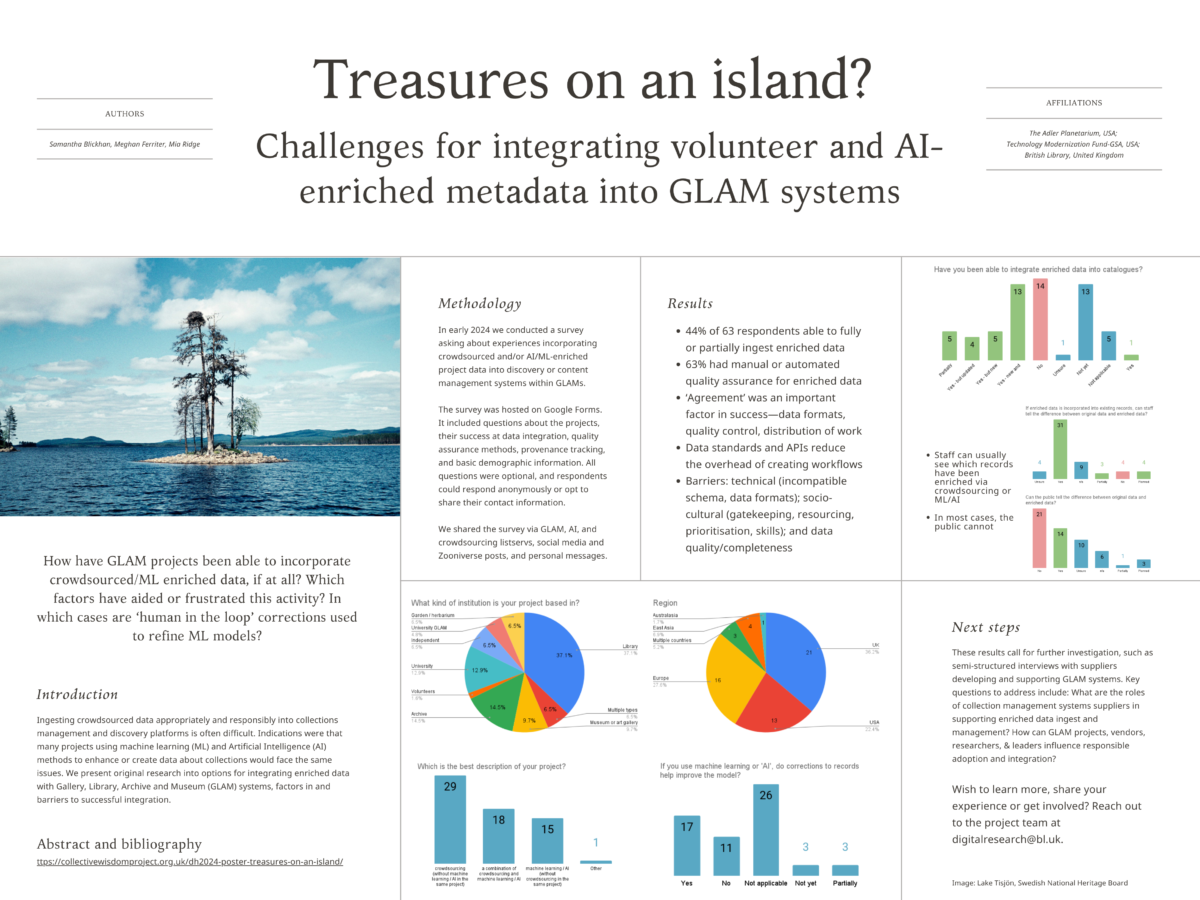

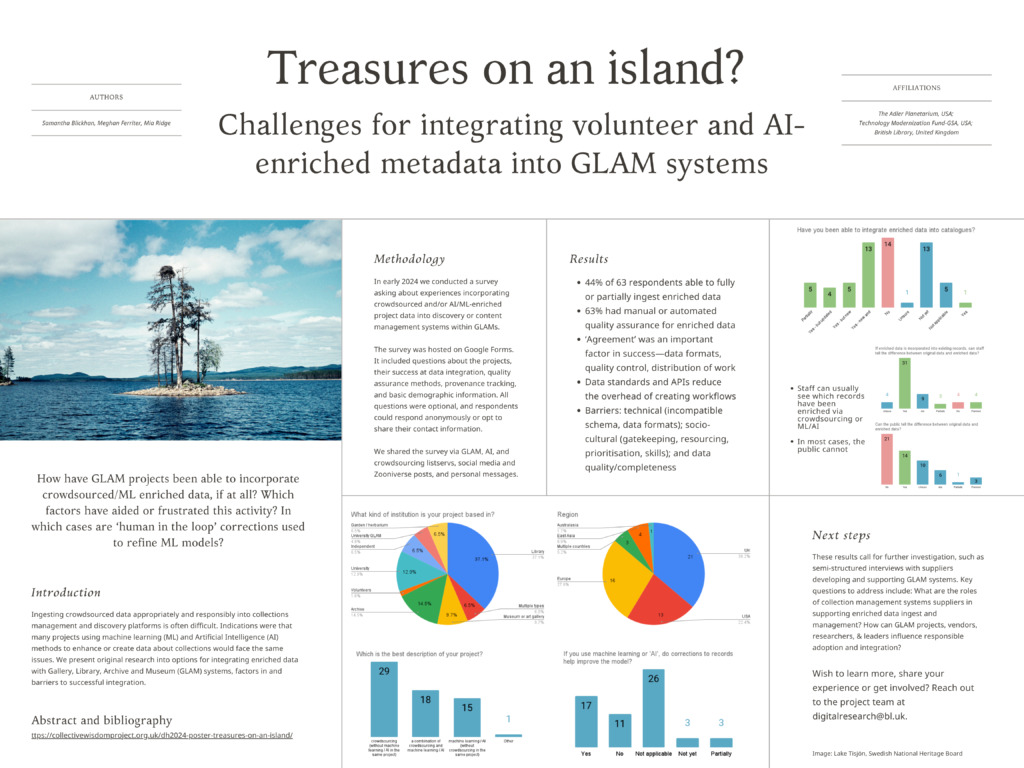

In this poster, we share original research into how and to what extent GLAMs have been able to incorporate crowdsourced and ML / AI-enriched data into their collections or other core systems, including the extent to which the provenance of the enriched data is transparent.[4] Specifically, we will present the results of a public survey for GLAMs inviting them to share how they (as projects, organisations, systems) have been able to incorporate externally-enriched data, if at all. We distributed the survey via mailing lists, social media and through personal contacts, attempting to reach as many people as possible in the international community of practice around crowdsourcing and machine learning in GLAMs.

Our survey[5] asked questions about the project including title, start / end dates, links; whether it used crowdsourcing, machine learning or both to enrich data; factors in their ability or failure to ingest new or enriched data; quality assurance methods; its ability to record and/or display the provenance of enriched information; and information about the individual responding and their organisation. We asked how and where systems capabilities (including organisation systems of record, crowdsourcing projects, and/or vendor systems creating ML-enriched data) influenced project design, public engagement, and data transformation efforts, and any additional 'middleware' systems they have developed to meet their needs. As we reflect on over a decade of exploring questions of quality assurance for crowdsourcing, we apply this line of thinking to the quality of ML-enriched data. How many systems support some form of 'human in the loop' correction, quality assurance or modification of enriched data? When and how do corrections help train the underlying algorithm? Our questions about technical platforms used in projects, and conversations with the suppliers of commercial and open source CMS and GLAM systems provide some insights into questions of transparency, responsibility, and their technical capability and appetite for enabling the integration of enriched data.[6]

Conclusion

Building on the recommendations in our Collective Wisdom White Paper for an inherently collaborative and interdisciplinary future for crowdsourcing and AI, the need for spaces for exchange, the role of sociotechnical critique, and a commitment to surfacing and sharing knowledge, our current research makes a strong contribution to the fields of digital humanities and digital cultural heritage. We hope it will enable organisations with scientific, historical and cultural collections to plan methods for integrating enriched data into collections systems in line with their values and the needs of their audiences.

Ultimately, this project focuses on enacting values and shared responsibilities across collaborative domains, and aligns well with the conference themes. The DH2024 conference is an ideal event for constructive dialogue with scholars and practitioners working on related topics, and we are certain it will lead to further collaborations.

Bibliography

Averkamp, Shawn, Kerri Willette, Amy Rudersdorf, and Meghan Ferriter. 2021. ‘Humans-in-the-Loop Recommendations Report’. LC Labs, Library of Congress. https://labs.loc.gov/static/labs/work/reports/LC-Labs-Humans-in-the-Loop-Recommendations-Report-final.pdf.

Collections Trust, Museums, Libraries, Archives (MLA) London, Caroline Reed, and Heather Lomas. 2009. ‘Revisiting Museum Collections (3rd Edition)’. Collections Trust. https://collectionstrust.org.uk/wp-content/uploads/2016/10/Revisiting-Museum-Collections-toolkit.pdf.

Europeana Initiative. 2023. ‘Enrichments Policy for the Common European Data Space for Cultural Heritage’. Europeana Initiative. https://pro.europeana.eu/post/enrichments-policy-for-the-common-european-data-space-for-cultural-heritage.

Kasprzik, Anna. 2023. ‘Automating Subject Indexing at ZBW: Making Research Results Stick in Practice’. LIBER Quarterly: The Journal of the Association of European Research Libraries 33 (1). https://doi.org/10.53377/lq.13579.

Krc, Matthew, and Anna Oates Schlaack. 2023. ‘Pipeline or Pipe Dream: Building a Scaled Automated Metadata Creation and Ingest Workflow Using Web Scraping Tools’. The Code4Lib Journal, no. 58 (December). https://journal.code4lib.org/articles/17932.

Padilla, Thomas, Hannah Scates Kettler, Stewart Varner, and Yasmeen Shorish. 2023. ‘Vancouver Statement on Collections as Data’, September. https://doi.org/10.5281/zenodo.8342171.

Reed, Caroline. 2013. ‘Is Revisiting Collections Working? Summary Report’. Paul Hamlyn Foundation. http://ourmuseum.org.uk/wp-content/uploads/Is-Revisiting-Collections-working_summary.pdf.

Ridge, Mia, Samantha Blickhan, and Meghan Ferriter. 2023. ‘Recommendations, Challenges and Opportunities for the Future of Crowdsourcing in Cultural Heritage: A White Paper’. July 13. https://doi.org/10.5281/zenodo.8211355.

———. 2024. ‘Survey Questions: Challenges for Integrating Volunteer and AI-Enriched Metadata into GLAM Systems’. https://collectivewisdomproject.org.uk/wp-content/uploads/Challenges-for-integrating-volunteer-and-AI-enriched-metadata-into-GLAM-systems-Google-Forms.pdf

Ridge, Mia, Samantha Blickhan, Meghan Ferriter, Austin Mast, Ben Brumfield, Brendon Wilkins, Daria Cybulska, et al. 2021. The Collective Wisdom Handbook: Perspectives on Crowdsourcing in Cultural Heritage. Community Review edition. https://doi.org/10.21428/a5d7554f.1b80974b.

Ridge, Mia, Meghan Ferriter, and Samantha Blickhan. 2024. ‘Survey: Integrating Volunteer and AI-Enriched Metadata into Collections Systems’. Collective Wisdom (blog). 20 March 2024. https://collectivewisdomproject.org.uk/survey-integrating-volunteer-and-ai-enriched-metadata-into-collections-systems/.

[1] https://ff2023.archive.org/

[2] Similar issues with integrating information from relevant communities into collections systems were found by advocates of the Revisiting Collections methodology, which was designed as a 'framework for embedding new understanding and perspectives … directly within the museum or archive’s collection knowledge management system', incorporating the 'wealth of new understanding and expertise' that communities could bring to the interpretation of collections (Collections Trust et al. 2009). Then, as now, issues were both technical – access to documentation systems for relevant staff and the need to modify those systems to accommodate new sources of data – and cultural – 'resistance in principle to compromising the objectivity and authority of the catalogue by adding external voices' (Reed 2013).

[3] The project (https://collectivewisdomproject.org.uk/) brought together nearly 20 practitioners and researchers to write a handbook on crowdsourcing in cultural heritage in 2021 (Ridge et al. 2021).

[4] Europeana (2023) recommends that enrichments have transparent provenance, are interoperable and reusable, encourage diversity and inclusion in participation, and are mindful of environmental sustainability.

[5] Available online at (Ridge, Blickhan, and Ferriter 2024), with further information for potential participants available at (Ridge, Ferriter, and Blickhan 2024)

[6] For example, see 'Humans-in-the-Loop Recommendations Report', (Averkamp et al. 2021).